Table of Contents

1 Motivation

2 Concept of Automated Testing in IT Software Development

3 Methodology for Demonstration of Benefits

4 Defining Objectives and KPIs associated with Automated Testing

5 Risks and Opportunities

6 Costs and Benefits of Introducing Automated Tests

7 Sensitivity of Benefits to Variance in KPIs

8 Summary

1 Motivation

Most industries, including information and communication technologies, are evolving swiftly as repetitive activities are automated. Automation of manufacturing has received significant public attention, with tedious, human-performed processes replaced by robots and automatic production lines in various configurations. Less visible, but perhaps even more drastic, is automation in corporate offices. A variety of roles that deal with “pushing paper” are being replaced by information systems, so that repetitive verification and validation activities are gradually taken out of the labor market. Software development is a similar case, as software requires extensive testing prior to its release to end users. As the list of features in a SW package grows over time, so do the time and labor required to test the new functionalities and to execute the set of regression tests.

This paper aims to clear the mist around the hard benefits of introducing automated testing in software development. It also presents a rigorous methodology for determining those benefits, to support the case for automated testing in the decision-making process of key stakeholders. Alongside hard benefits that impact the profit and loss statement, multiple soft benefits might be associated with automated testing, with an ultimate impact on the long-term performance of the business entity.

2 Concept of Automated Testing in IT Software Development

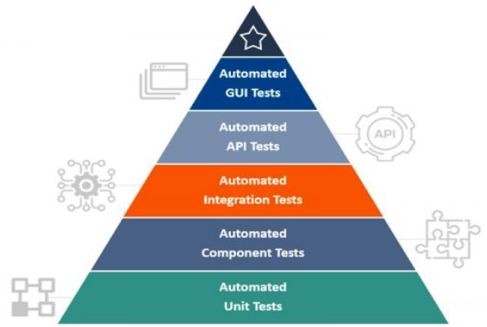

Testing in general aims at comparing actual outcomes with predicted outcomes. Automated testing uses software separate from the software under validation to control the execution of tests. It is a critical enabler for the introduction of continuous integration and delivery, and it spreads across multiple levels of the project. The core of automated testing lies in unit tests, which validate whether units of code match the expected functional requirements. The vast majority of automated tests fall into this category, accounting for approximately 70% of all tests. Another group of tests focuses on the functionality of SW components, such as user account creation, user authorization or order processing in a typical e-shop application. Integration testing, in turn, ensures that the individual components interact as intended, e.g. that customer data are transferred properly through the entire order fulfillment process. The individual areas of testing form a pyramid, as illustrated in Figure 1.

Figure 1: Pyramid of automated testing [1]

[1] Available at www.quateslab.com

Above the API tests, the very peak of the pyramid is formed by GUI tests, which typically have the smallest share in the total number of automated test cases. GUI tests are expensive to implement and are susceptible to even slight modifications of the GUI layout or functionality.
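To make the base of the pyramid concrete, the following minimal sketch shows a unit test that compares an actual outcome with a predicted one. The function `order_total_cents` and its behavior are hypothetical, chosen only to echo the e-shop example; they are not taken from any application discussed in this paper.

```python
import unittest


def order_total_cents(unit_price_cents: int, quantity: int) -> int:
    """Hypothetical unit under test: total price of one e-shop order line."""
    if quantity < 0:
        raise ValueError("quantity must not be negative")
    return unit_price_cents * quantity


class OrderTotalTest(unittest.TestCase):
    def test_matches_predicted_outcome(self):
        # The predicted outcome (1998 cents) is compared with the actual one.
        self.assertEqual(order_total_cents(999, 2), 1998)

    def test_rejects_negative_quantity(self):
        # Invalid input is expected to be reported, not silently accepted.
        with self.assertRaises(ValueError):
            order_total_cents(999, -1)
```

Saved in a module, such tests can be run without human interaction, for example with `python -m unittest`, which is what allows them to be wired into an automated build.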

To fulfill its purpose, the automated testing suite shall meet the following attributes:

- Ability to detect faults

- Tests ought to be easy to create

- Tests should be executable automatically

- Test results are reported out automatically

- The testing framework is integrated with other tools in the development and deployment chain

- Evaluation of results is performed by the team

Introduction of automated testing in SW development and deployment process needs to be supported by appropriate infrastructure and tools. Additionally, the testing framework provides an integrated system defining rules of automation.

3 Methodology for Demonstration of Benefits

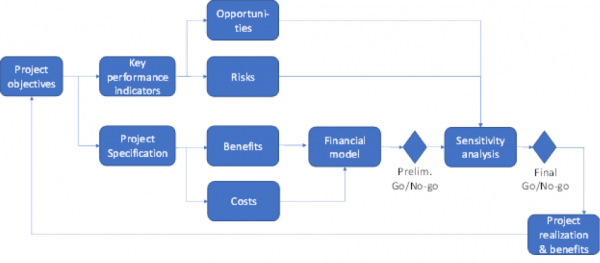

A wide array of inputs influences the decision whether, and to what extent, to implement automated SW testing. A transparent methodology for determining the benefits of automated testing, including associated risks and opportunities, is essential for qualified decision making. Figure 2 illustrates the workflow used to assess automated testing for the purpose of allocating investments in the enterprise.

Figure 2: Workflow for decision making and evaluation of costs vs benefits associated with the introduction of automated testing

Based on the project's objectives, the methodology steers the project team toward developing a set of KPIs for measuring the success of the project. Both risks and opportunities might considerably influence the ability to achieve the expected benefits. They represent valuable inputs to a sensitivity analysis: risks might reduce benefits, whilst opportunities might increase them. Decision-makers are thus presented with a business case that includes not only the baseline scenario but also a number of what-if scenarios that take into account the severity of risks and the magnitude of opportunities.

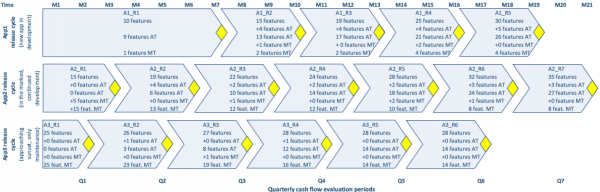

The benefits of introducing automated testing are demonstrated on a representative release train consisting of three SW applications, as outlined in Figure 3. As a baseline, only manual testing coverage is assumed, with automated testing introduced gradually where appropriate, alongside incremental additions of new functionalities. The representative company operates on a three-month release cycle, with each application staggered by one month relative to the others. Thus, the workload is evenly distributed not only for the testing team but also for the customer support involved in rolling the applications out into service.

Figure 3: Representative release train at quarterly cadence for three applications with the gradual addition of functionalities. Amber chevrons indicate particular releases. The figure captures the total number of features, the number of new features added in Automated or Manual testing mode, and the breakdown of features between Automated and Manual testing modes. Features previously tested manually are converted into Automated testing mode when beneficial.

The company has multiple applications deployed in the field, in various stages of the product lifecycle:

- App#1: new application in development

- App#2: already in the market, but with continued development

- App#3: approaching its sunset with maintenance and upgrades only

Releases of applications are staggered so that every month only one application is released to allow redeployment of testing resources among applications in development.

To enable automated testing, common foundations need to be established, among others:

- Automated SW build process

- Testing environment

- Test management tool

- Test framework

It is apparent that the introduction of automated tests will be front-loaded by capital investments influencing cash flow and overall financial performance of the project.

4 Defining Objectives and KPIs associated with Automated Testing

Key performance indicators shall reflect the objectives and expected outcomes of a project. Whilst a particular set of KPIs might vary from company to company, perhaps even from project to project, a generic set of KPIs associated with automated testing might include:

1. Increased Internal Rate of Return (IRR), shortened payback period and ability to generate positive NPV (Net Present Value) in association with investments into ICT project

1.1. Achieve IRR [2] above the discount rate [3]

1.2. Achieve payback period below two years [4]

1.3. Generate positive NPV [5]

2. Enhanced quality, e.g. prevention of defects leaking with the released application

2.1. Leakage of large and small defects reduced by at least 50% once automated testing is introduced

3. Reproducibility of the automated testing framework across the entire application portfolio in production

3.1. When justified, e.g. by the SW architecture or the technologies deployed

____________

[2] Internal Rate of Return is a discount rate that makes the net present value (NPV) of all cash flows from a particular project equal to zero.

[3] The discount rate expresses the time value of money and can make the difference between whether an investment project is financially viable or not.

[4] The payback period refers to the amount of time it takes to recover the cost of an investment.

[5] Net present value (NPV) is the difference between the present value of cash inflows and the present value of cash outflows during the time period.
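The three financial KPIs defined in the footnotes can be sketched in a few lines of code. This is a minimal illustration rather than the model behind the business case: it assumes evenly spaced periods, a discount rate expressed per period, and a conventional project (one outflow followed by inflows). The function names and the bisection search for IRR are choices made here for illustration.

```python
from typing import Optional, Sequence


def npv(rate: float, cash_flows: Sequence[float]) -> float:
    """Net present value; cash_flows[0] occurs today (period 0)."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows: Sequence[float], low: float = -0.99, high: float = 10.0,
        tol: float = 1e-9) -> float:
    """Per-period rate at which NPV is zero, found by bisection.

    Assumes NPV changes sign exactly once between `low` and `high`.
    """
    if npv(low, cash_flows) * npv(high, cash_flows) > 0:
        raise ValueError("NPV does not change sign on the search interval")
    while high - low > tol:
        mid = (low + high) / 2.0
        if npv(low, cash_flows) * npv(mid, cash_flows) <= 0:
            high = mid  # the root lies in the lower half
        else:
            low = mid   # the root lies in the upper half
    return (low + high) / 2.0


def payback_period(cash_flows: Sequence[float]) -> Optional[int]:
    """First period in which the cumulative cash flow is no longer negative."""
    cumulative = 0.0
    for t, cf in enumerate(cash_flows):
        cumulative += cf
        if cumulative >= 0:
            return t
    return None  # the investment is never recovered


# Hypothetical cash flows: an outflow of 100 followed by two inflows of 60.
example = [-100.0, 60.0, 60.0]
```

With the hypothetical `example` series, `payback_period(example)` returns 2, and KPI 1.1 corresponds to checking that `irr(example)` exceeds the chosen discount rate.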

The following supporting performance indicators underpin the KPIs proposed above:

- Elimination of a stabilization period (sprint in agile framework) ahead of actual SW release resulting in work effort reduction

- More flexibility in the timing of releases as well as the option of shortening the release cycle due to automating regression test sets

- Reduction of time spent on testing, both by developers and testers resulting in work effort reduction

- Avoidance of post-release application patches caused by large defects that leaked into the released version

- Lower workload of the customer help desk, as enhanced quality prevents customer issue reports

- Greater bandwidth of corporate staff to develop novel products and functionalities through more effective SW development and deployment cycle

Objectives and success factors of a project set a foundation for developing a business case to justify the required investment, including the case of introducing automated SW testing. However, it is important to consider the constantly changing socio-economic climate. The business case needs to be robust enough to cope with fluctuations in the hourly rates of the key professions involved, with a lack of skilled workforce, and with the need to shorten the release cycle to keep pace with the competition. Internal factors might also step in, e.g. the inability of the organization to deploy the workforce required to develop and sustain the automated testing framework in its daily operations.

5 Risks and Opportunities

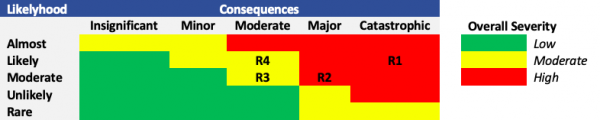

There are multiple risks associated with the introduction of automated testing into application development and deployment. They are elaborated below against the KPIs defined in Section 4.

R1: IF the architecture and technologies of the applications selected for testing automation are not suitable for it, THEN the pace of automated testing deployment is slowed down, LEADING TO lower ROI and a longer payback period for the investment in automated testing.

R2: IF the SW development and testing teams are not allocated to testing automation per initial assumptions, THEN the pace of automated testing deployment is slowed down, LEADING TO lower ROI and a longer payback period for the investment in automated testing.

R3: IF the SW development and testing teams are not skilled enough or not trained to grasp testing automation, THEN coverage of requirements by automated tests might be insufficient, LEADING TO automated tests not being able to prevent leakage of large defects.

R4: IF the concept of automated testing lacks a technical owner and managerial support, THEN the implementation of automated testing might be inconsistent across applications in the release train, LEADING TO KPIs not being met for all applications in the release train, both in terms of defect leakage reduction and financial return on investment.

There is an endless number of risks that might emerge in relation to the introduction of automated testing. Each of them might negatively impact the fulfillment of the KPIs that will eventually be used to evaluate project success. Significant risks might be visualized graphically in terms of their distribution, as in Figure 4 below, to assist with the preparation of risk mitigation strategies.

Figure 4: Assessment of individual risks associated with the introduction of automated testing.

Identified opportunities might result from developments in the market situation as well as from certain components of the affected software being more stable than assumed in the initial business case, namely:

O1: IF the business team decides that the release cycle for particular applications needs to be accelerated, THEN savings from the elimination of manual testing and stabilization sprints increase, LEADING TO a further reduction of the payback period for investments in automated testing.

O2: IF GUI layout and associated design are predicted to remain stable, THEN there is an opportunity to automate GUI testing for affected applications, LEADING TO further spread of testing automation and improved ROI.

Further scenarios might occur, such as the termination of development and support of legacy applications that are not suitable for automated testing. This would make it possible to redeploy the workforce onto the automated testing project, accelerating its introduction into engineering operations and speeding up the spread of automated test coverage. Identifying opportunities also strengthens the case for automated testing when seeking buy-in from decision makers.

6 Costs and Benefits of Introducing Automated Tests

Deployment of automated testing does not represent a revenue-generating stream as such; however, its introduction results in measurable savings. The business case has been developed on a set of assumptions based on experience with multiple cases of implementing automated testing in routine operations.

There are upfront capital expenditures associated with the required infrastructure setup as well as with the development of a framework for automated testing. For a medium-size company, the business case assumes $10k for infrastructure setup and $15k for development of the automated testing framework.

Operational costs include two licenses for the test management tool serving the testing team, assumed to amount to $500 per month.

The conventional process of testing software relies on a series of regression tests to ensure that defects are detected and corrected ahead of the SW release. With the increasing complexity and number of functionalities, it becomes ever more challenging to deploy enough testing resources and to allocate the time required to perform the full set of regression tests. The likelihood of defects leaking into the production SW increases, which might result in additional releases containing the required fixes. The cost of fixing defects, alongside their quantity, plays a critical role in the business case for automated tests. Two simplified scenarios were estimated: a small defect, and a large defect that requires revisiting the architecture and developing additional requirements. Analysis of the problem precedes fixing the defect, with the following estimates of work effort:

- Analyze the problem: one man-day of a system engineer; two man-days of SW developer

- Fix a small defect: two man-days of a system engineer

- Fix a big defect: three days of a scrum team with seven members, resulting in 21 man-days
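The effort estimates above translate into a cost per defect once a labor rate is chosen. The sketch below assumes that the analysis effort applies to every defect, small or large, and that a single blended rate per man-day covers all roles; both are simplifications introduced here, and the rate passed in is the caller's assumption.

```python
ANALYSIS_MAN_DAYS = 1 + 2   # one system-engineer day plus two SW-developer days
SMALL_FIX_MAN_DAYS = 2      # two system-engineer days
LARGE_FIX_MAN_DAYS = 3 * 7  # three days of a seven-member scrum team


def defect_man_days(small: int, large: int) -> int:
    """Total effort to analyze and fix the given numbers of defects."""
    return (small * (ANALYSIS_MAN_DAYS + SMALL_FIX_MAN_DAYS)
            + large * (ANALYSIS_MAN_DAYS + LARGE_FIX_MAN_DAYS))


def defect_cost(small: int, large: int, rate_per_man_day: float) -> float:
    """Cost of the defects at an assumed blended rate per man-day."""
    return defect_man_days(small, large) * rate_per_man_day
```

Under these assumptions a small defect costs 5 man-days and a large one 24 man-days, so preventing a single large defect saves roughly as much effort as preventing five small ones.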

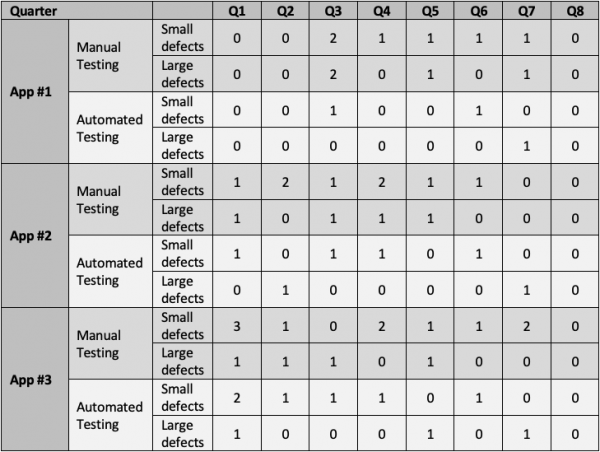

The illustrative scenario was developed for the release train depicted in Figure 3 in terms of the number of small and large defects detected under manual testing and once automated testing is introduced. Table 1 below specifies the number of defects detected and fixed per quarter.

Table 1: Summary of defects detected under manual and automated testing for apps in development over time.

According to Table 1, introducing automated testing helped to reduce the number of large defects leaked to 25% of its original value for App #1, to 50% for App #2 and to 33% for App #3.

Alongside savings from the reduction of defects that propagate into released SW, there are cost avoidance savings from the reduction or elimination of manual regression testing. Let’s assume 10 test cases are required per feature on average. A tester needs to be allocated to develop a manual test case, to execute it, and to adapt it when functionalities are amended:

- cost to develop a MT test case corresponding to 20mins of tester’s time

- cost to adapt a MT test case corresponding to 20mins of tester’s time

- cost to run a MT test case corresponding to 10mins of tester’s time

Similar steps are involved in developing, adapting and running automated tests:

- cost to develop an AT test case corresponding to 60mins of tester’s time

- cost to adapt an AT test case corresponding to 20mins of tester’s time

- cost to run an AT test case corresponding to 0mins of tester’s time

The cash flow generated through the introduction of automated testing per individual application equals the difference between the costs of manual testing and the costs of testing in automated mode.
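The per-test-case efforts listed above are enough to estimate when automation starts to pay off in tester time. The sketch below uses only those figures and the assumed 10 test cases per feature; the number of regression runs and adaptations is an input chosen by the reader, and infrastructure and license costs are deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EffortPerTestCase:
    """Tester minutes needed for one test case."""
    develop_min: int
    adapt_min: int
    run_min: int

    def total_minutes(self, runs: int, adaptations: int = 0) -> int:
        return (self.develop_min
                + adaptations * self.adapt_min
                + runs * self.run_min)


MANUAL = EffortPerTestCase(develop_min=20, adapt_min=20, run_min=10)
AUTOMATED = EffortPerTestCase(develop_min=60, adapt_min=20, run_min=0)
TEST_CASES_PER_FEATURE = 10


def saving_per_feature_minutes(runs: int, adaptations: int = 0) -> int:
    """Tester minutes saved per feature by automating its test cases."""
    manual = MANUAL.total_minutes(runs, adaptations)
    automated = AUTOMATED.total_minutes(runs, adaptations)
    return TEST_CASES_PER_FEATURE * (manual - automated)


def break_even_runs() -> int:
    """Smallest number of runs at which automation is no longer more costly."""
    runs = 0
    while saving_per_feature_minutes(runs) < 0:
        runs += 1
    return runs
```

With these figures the extra 40 minutes of development per automated test case is recovered after four executions, and every further regression run saves 100 tester minutes per feature.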

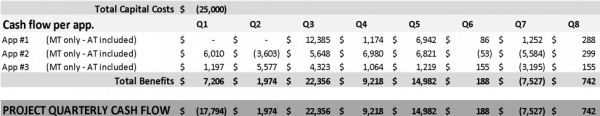

Table 2: Projected cash flow of introducing automated tests for three representative SW applications.

Assuming a fixed cost of capital of 10%, the project generates a net present value of over $14k in the two-year evaluation period. The internal rate of return reaches 45%, significantly exceeding the cost of capital.

The payback period for the investment is estimated below one year.

7 Sensitivity of Benefits to Variance in KPIs

The business case outlined in Table 2 assumes a set of fixed variables, including the cost of capital, labor costs, and the ability to reduce the number of defects that propagate into released SW. Whilst the cost of capital and labor costs are largely driven by the macroeconomic climate in the country, the number of defects in the released SW has more to do with company-internal factors such as the maturity and capability of the engineering teams and their ability to leverage the advantages of introducing automated tests.

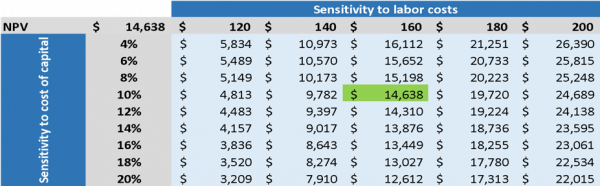

Table 3 below depicts the combined sensitivity of NPV to a cost of capital ranging from 4% to 20% and to labor costs for testing staff ranging from $120 to $200 per man-day. The worst-case scenario is a cost of capital of 20% combined with a labor rate of $120 per man-day: the lower the cost of testing staff, the lower the savings associated with the reduction of regression testing and the elimination of defects.

Table 3: Combined sensitivity of NPV to the cost of capital and labor costs.

It is apparent that the business case for automated testing is very robust to the external factors of labor costs and cost of capital, as it generates positive NPV even in the worst case.
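A combined sensitivity grid of this kind can be sketched as follows. The capital expenditure and license fee come from the business case above; the quarterly effort savings in `SAVED_MAN_DAYS` are hypothetical placeholders, not the values behind Table 2, and the conversion of the annual cost of capital to a quarterly rate is an assumption of this sketch.

```python
from typing import Dict, List, Tuple

CAPEX = 10_000 + 15_000        # infrastructure + framework, from the business case
LICENSE_PER_QUARTER = 3 * 500  # test management tool licenses at $500 per month

# HYPOTHETICAL effort saved per quarter, in man-days, over two years.
# Placeholder values only; they do not reproduce Table 2.
SAVED_MAN_DAYS = [20, 30, 40, 50, 60, 60, 60, 60]


def quarterly_rate(annual_rate: float) -> float:
    """Convert an annual cost of capital to the equivalent quarterly rate."""
    return (1.0 + annual_rate) ** 0.25 - 1.0


def project_npv(annual_rate: float, labor_rate: float) -> float:
    """NPV of the placeholder project for one (cost of capital, labor rate) pair."""
    rate = quarterly_rate(annual_rate)
    value = -float(CAPEX)  # paid up front, therefore not discounted
    for quarter, man_days in enumerate(SAVED_MAN_DAYS, start=1):
        net = man_days * labor_rate - LICENSE_PER_QUARTER
        value += net / (1.0 + rate) ** quarter
    return value


def sensitivity_grid(annual_rates: List[float], labor_rates: List[float]
                     ) -> Dict[Tuple[float, float], float]:
    """NPV for every combination of cost of capital and labor rate."""
    return {(rate, labor): project_npv(rate, labor)
            for rate in annual_rates for labor in labor_rates}


if __name__ == "__main__":
    grid = sensitivity_grid([0.04, 0.10, 0.20], [120.0, 160.0, 200.0])
    for (rate, labor), value in sorted(grid.items()):
        print(f"cost of capital {rate:.0%}, ${labor:.0f}/man-day: NPV ${value:,.0f}")
```

Whatever savings profile is plugged in, the structure shows why the corner with the highest cost of capital and the lowest labor rate is the worst case: discounting shrinks future savings, and cheaper testers make each saved man-day worth less.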

The capability of automated tests to minimize the number of released defects is driven by the approach to introducing the automated test framework and the testing environment, as well as by the sequence in which tests are introduced according to the pyramid of testing in Figure 1. The sensitivity to the number of defects detected in released code reflects risks R1 to R4 discussed in Section 5 of the paper.

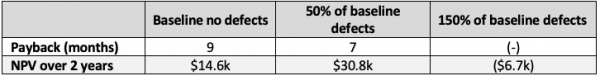

The baseline scenario considers the spread of defects according to Table 1. The optimistic scenario considers 50% fewer defects, and the pessimistic scenario 50% more defects, than the baseline propagated into released SW once automated tests are operational. The ability to eliminate defects has a profound effect on the financial performance of the project, as shown in Table 4 below.

Table 4: Sensitivity of NPV and payback period to the number of small and large defects discovered once automated testing is introduced with a baseline number of defects as per Table 1.

The optimistic scenario for defect elimination shortens the payback period and increases the NPV generated over the two-year assessment period. The pessimistic scenario, however, results in a negative NPV over the two-year evaluation period and in the inability to repay the investment in automated testing of SW. The concept of automated testing is sensitive to the way it is introduced: best industrial practices must be followed to ensure the expected outcomes, both in the quality of released SW and in the impact on the financial performance of the company.

8 Summary

The introduction of automated software testing presents a significant opportunity for companies to adjust to current fast-paced market conditions, which require frequent product enhancements. Although automated testing offers indirect benefits associated predominantly with labor costs, it can generate positive cash flow and offer appealing payback periods to support the approval process for the required investment. However, automated testing needs to be introduced thoroughly and according to state-of-the-art practices to ensure the expected outcomes. Otherwise, the expected benefits in terms of eliminating defects in the production version, and the consequent savings in the operations of SW teams, might not materialize.

The methodology for assessing automated testing presented in this paper can be adjusted to suit the conditions and needs of a particular organization. Book your appointment with Radek Kitner and Erik Odvářka to find out how the introduction of automated testing will improve the profitability and operations of your business.
